If you build on a modern LLM API, you are building on a dependency that can change your product without your consent. That statement sounds dramatic, yet most developers already live it. You wake up to a support ticket that says the assistant feels off today. You check your prompts, your retrieval, your tools, and your logs. Nothing in your code explains it, because the change most likely happened upstream.

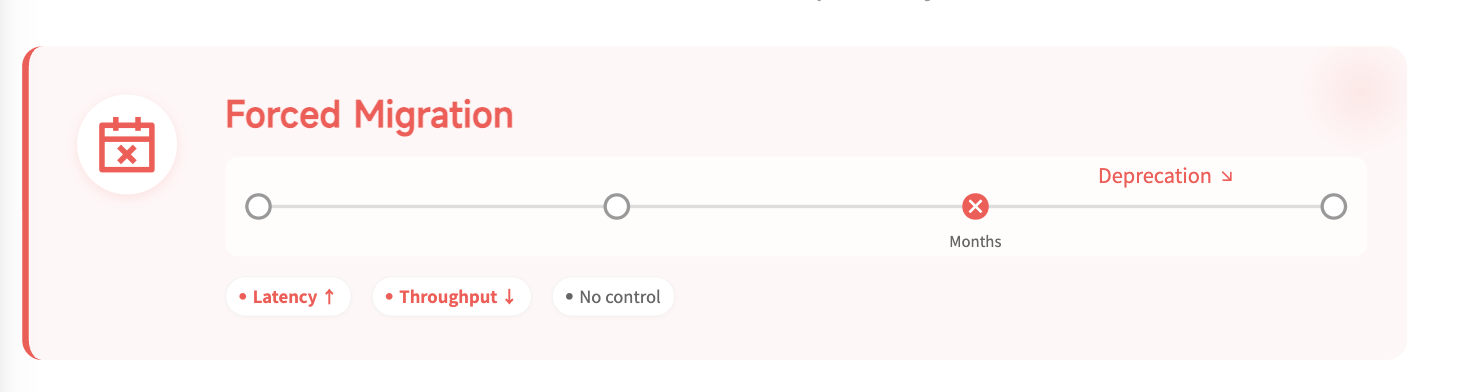

The first pain is forced migration.

Provider release cadence keeps accelerating, and sunset windows keep shrinking. A model you tuned your product around can disappear in months. Even when a model remains available, it often gets deprioritized relative to the newest flagship. Latency creeps up, throughput tightens, and your user experience shifts in ways you cannot explain. The worst part is that you cannot schedule this risk, because you do not control the provider timeline.

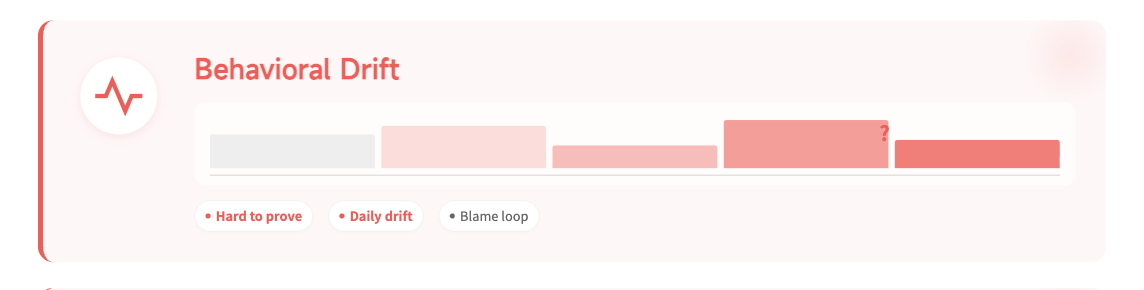

The second pain is behavioral drift you can feel but not prove.

Developers talk about "the month the outputs got worse" because that is how drift shows up: as a feeling, not a diff. Your agent starts missing obvious details in a workflow it used to handle. Your sales assistant changes tone and erodes user trust. Your tool-calling pipeline degrades and produces brittle actions that require manual cleanup. Model swaps are only one cause. Routing changes, quantization changes, inference engine updates, and other serving optimizations can all change observed behavior.

These silent shifts create a debugging culture built on argument instead of evidence. One teammate blames prompts, another blames retrieval, and a third blames user error. Customer support blames engineering, and engineering blames the provider. You may run an internal benchmark, but it quickly becomes expensive to maintain. Cost also keeps you from running it continuously, yet production drift can happen daily.

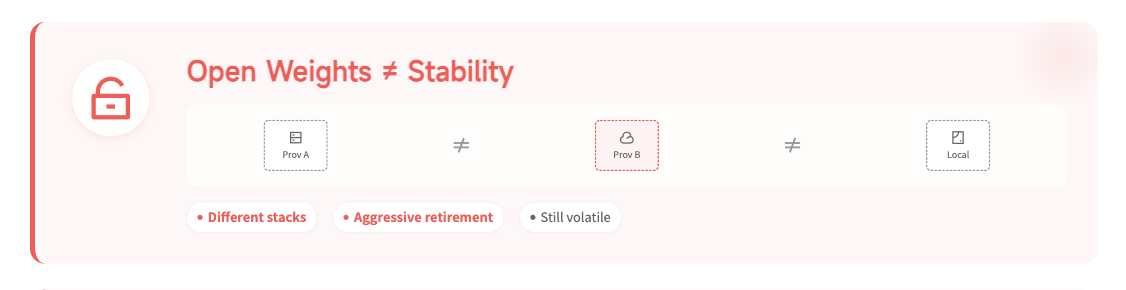

Open weights rarely solve the operating problem by themselves.

Many developers try to escape closed providers by choosing open models served through aggregators. The result is often a different kind of volatility. Hosting providers juggle many models, then retire them aggressively to manage cost and capacity. The same model name can behave differently across providers because their inference stacks differ, and benchmarks show large gaps between providers serving the same open model. You also inherit an opaque set of trade-offs around batching, caching, compression, and attention implementations.

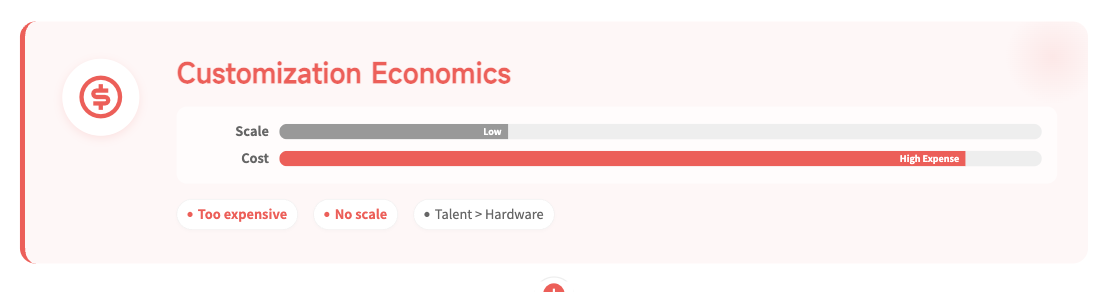

Customization is still expensive, and fallback architectures carry their own cost.

Fine-tuning sounds like the logical answer for stable behavior. In practice, hosted fine-tunes often cost too much for a typical team. Running your own GPUs rarely makes economic sense at small scale: hardware depreciates fast, and workload requirements shift just as fast. You also need people who can run the stack, and talent cost can dominate hardware cost. Without multi-tenant scale, you cannot match the unit economics of large providers.

Some teams build multi-provider fallback, which looks safe on paper. In reality, it can switch the brain of your product mid-conversation. The experience can feel jarring because style, refusal behavior, and tool use differ. Debugging also becomes harder because every provider has different failure modes. Other teams constrain the model to narrow JSON outputs to reduce risk. That can help reliability, yet it also limits what you can ship competitively.

Enterprise contracts sound like the mature way to buy stability, privacy, and support. They often function as comfort language rather than enforceable protection. You rarely have visibility into how the provider handles your data, and you rarely have leverage during an outage that harms your business. Litigation takes years, and legal costs can exceed the damage you suffered. Even if you win, your product may not survive the timeline.

Observability has to come before truth checks.

The common thread across all of these pain points is missing observability. At this layer, developers do not need the model to be correct; correctness is a separate concern. Developers need to know what ran, how it ran, and what changed since yesterday. That is the minimum contract required for serious engineering. Identity must mean more than a marketing model name: it must include a pinned version, a pinned quantization, and a pinned execution profile.
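To make "pinned identity" concrete, here is a minimal Python sketch of an identity record that goes beyond the model name and can be diffed between two days. The `ExecutionIdentity` class, its fields, the `what_changed` helper, and the sample values (`acme-chat`, the version tag, the engine entry) are all hypothetical; you could only log this if your provider actually exposes the underlying data.

```python
from dataclasses import asdict, dataclass
import hashlib
import json


@dataclass(frozen=True)
class ExecutionIdentity:
    """Hypothetical record of what actually served a request."""
    model_name: str        # the marketing name shown in docs
    model_version: str     # pinned version tag or weights hash
    quantization: str      # numeric format, e.g. "bf16" or "int8"
    execution_profile: tuple[tuple[str, str], ...]  # sorted (key, value) pairs, e.g. engine version

    def fingerprint(self) -> str:
        """Short, stable hash of every identity field, for logging and comparison."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:16]


def what_changed(before: ExecutionIdentity, after: ExecutionIdentity) -> list[str]:
    """Names of identity fields that differ between two runs."""
    a, b = asdict(before), asdict(after)
    return [name for name in a if a[name] != b[name]]


# Same marketing name, different execution: the name alone hides the change.
yesterday = ExecutionIdentity("acme-chat", "2024-05-01", "bf16", (("engine", "v1.2"),))
today = ExecutionIdentity("acme-chat", "2024-05-01", "int8", (("engine", "v1.2"),))

print(what_changed(yesterday, today))  # ['quantization']
print(yesterday.fingerprint() == today.fingerprint())  # False
```

Logging the fingerprint with every request turns "it feels off today" into a concrete question: did the fingerprint change, and if so, which field?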

Once you have that base, you can finally separate integrity from truth. Integrity asks: did the system run as expected? Truth asks: is the output right for this task? A verified run can still produce a wrong answer, because hallucinations remain possible. RAG can still retrieve the wrong passage, and agents can still misuse tools. Serious teams still need evaluation sets, regression tests, tool guardrails, and monitored drift metrics. The difference is that truth checks become easier when execution becomes observable, because a failed check can no longer be blamed on an invisible change in the stack.
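One way to keep the two questions apart is to score every run on both axes and never collapse them into a single number. The sketch below assumes you can obtain an expected and an observed execution fingerprint for each run; `RunReport`, `evaluate`, and the toy `mentions_paris` test are hypothetical names for illustration, not any vendor's API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class RunReport:
    integrity_ok: bool  # did the system run as expected?
    truth_ok: bool      # is the output right for this task?

    def diagnosis(self) -> str:
        # Integrity comes first: if the execution changed, a failed truth
        # check cannot be pinned on prompts, retrieval, or tools yet.
        if not self.integrity_ok:
            return "execution changed: check the serving stack first"
        if not self.truth_ok:
            return "ran as expected, wrong answer: check prompt, retrieval, tools"
        return "ok"


def evaluate(expected_fingerprint: str, observed_fingerprint: str,
             output: str, truth_check: Callable[[str], bool]) -> RunReport:
    """Score one run on two independent axes."""
    return RunReport(
        integrity_ok=expected_fingerprint == observed_fingerprint,
        truth_ok=truth_check(output),
    )


def mentions_paris(text: str) -> bool:
    """Toy domain test standing in for a real evaluation."""
    return "Paris" in text


verified_wrong = evaluate("a1b2", "a1b2", "The capital of France is Lyon.", mentions_paris)
print(verified_wrong.diagnosis())  # ran as expected, wrong answer: check prompt, retrieval, tools
```

A verified run with a wrong answer sends you to prompts, retrieval, or tools; an unverified run sends you to the serving stack before anything else.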

A practical developer stack follows a clean order. First, you prove the run through strong identity and execution evidence. Second, you validate important outputs using tests that reflect your domain. Third, you monitor drift through metrics that you can act on. This order matters because it reduces the number of unknown variables. It is the difference between engineering and superstition.
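The same ordering can be written as a small gate. This is a Python sketch under stated assumptions: the identity check and the domain test are reduced to booleans, and `DriftMonitor`, `process_run`, and the baseline, window, tolerance, and minimum-sample values are hypothetical and purely illustrative.

```python
from collections import deque


class DriftMonitor:
    """Rolling pass rate on a domain test, compared with a baseline.

    Hypothetical helper: window, tolerance, and min_samples are illustrative.
    """

    def __init__(self, baseline_pass_rate: float, window: int = 50,
                 tolerance: float = 0.05, min_samples: int = 10):
        self.baseline = baseline_pass_rate
        self.tolerance = tolerance
        self.min_samples = min_samples
        self.results = deque(maxlen=window)  # oldest results fall off

    def record(self, passed: bool) -> None:
        self.results.append(passed)

    def pass_rate(self) -> float:
        return sum(self.results) / len(self.results) if self.results else 1.0

    def drifted(self) -> bool:
        # Too few samples: a single failure would look like a collapse.
        if len(self.results) < self.min_samples:
            return False
        return self.pass_rate() < self.baseline - self.tolerance


def process_run(identity_ok: bool, output_ok: bool, monitor: DriftMonitor) -> str:
    # Step 1: prove the run. An unverified run is not evidence about the model.
    if not identity_ok:
        return "rejected: execution identity mismatch"
    # Step 2: validate the output with a domain test and record the result.
    monitor.record(output_ok)
    # Step 3: monitor drift over verified runs only, so the metric has one variable.
    if monitor.drifted():
        return "alert: pass rate below baseline"
    return "ok" if output_ok else "failed domain test"


monitor = DriftMonitor(baseline_pass_rate=0.9, window=20)
for _ in range(10):
    process_run(True, True, monitor)
print(process_run(True, False, monitor))  # failed domain test (10/11 passes)
print(process_run(True, False, monitor))  # alert: pass rate below baseline (10/12)
```

Only verified runs feed the drift metric, so a falling pass rate points at model behavior rather than at an unnoticed change in the serving stack.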

This is where Ambient's pitch becomes concrete for developers. The claim is not that you should trust a new vendor blindly. The claim is that you should demand a stable quality bar and a stable execution contract. Ambient positions itself as a system where model identity, quantization, and inference characteristics stay pinned and inspectable. If that holds, the result is less surprise throttling, less unprovable drift, and a foundation you can actually debug.

The one-sentence takeaway: when behavior shifts silently, you do not have an API; you have a lease on someone else's decisions. When the lease changes, your product changes too. The fix starts with observability, then stability, then truth checks on top.